Chatbot-ing our way out of a support crisis

Has your customer support ever felt like a re-enactment of the Sisyphus myth?

40 open tickets.

You breathe in, roll up your sleeves: “Hold my coffee mug, I’m going in!”

5 hours later.

15 open tickets.

You high-five yourself for all the issues solved amidst your ad-hoc to-dos. Content, you log out, grab a snack, and go to bed.

Next morning.

40 open tickets.

“What the #$@&%*!”

A few months ago, support at our SaaS became this shared Sisyphean reality. However hard we worked at it, tickets just… kept stacking up.

I had read about “big” startup support horror stories before.

“Jeez, we’re lucky support isn’t such a pain at Snipcart!” I remember thinking in 2013.

I used to be convinced it would never be an issue for our small, humble SaaS.

But that humble SaaS scaled a bit. As support intensified, things started to change.

Development velocity plummeted. Marketing initiatives slowed down. Morale dropped a notch. I started understanding why entire products & blogs were built around support.

We had to do something.

After rounds of fruitless investigations, we came up with an idea: build a support chatbot.

Context & expectations

Our gut feeling was that most answers users wanted (especially technical ones) were already in our documentation. It felt like people simply weren’t reading through it. We knew the docs needed a little love, but we conveniently overlooked that fact.

“Why oh why aren’t our supposedly tech-savvy users reading all that info we’ve written for them?!” we chanted in harmony.

To top it off, we were still hooked on a grandfathered UserVoice plan with sluggish UX.

So our plan was simple:

- Switch to Intercom.

- Build a chatbot using their API to redirect users to the documentation.

Our expectations:

- Use automation and a new system to reduce support efforts and regain development velocity.

Now, we knew chatbots weren’t mature enough to replace our whole support infrastructure. With that in mind, we didn’t try to reinvent the chatbot wheel or inject hardcore machine learning into our chatbot. We just went for the lowest-hanging fruit and built what we fondly call “our dumb bot.”

First, we tagged the entries in our documentation (a Node.js app) with relevant keywords.

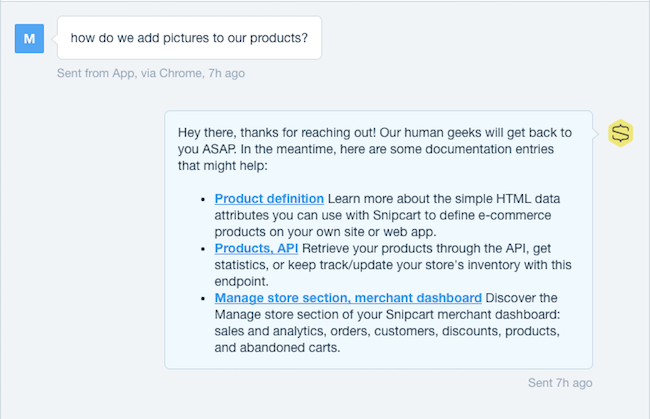

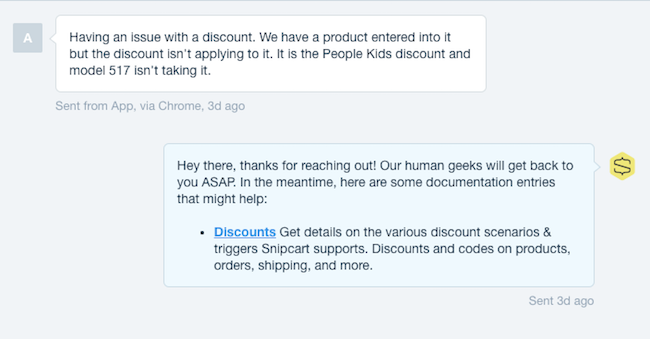

Then, we built the bot — a webhook handler in Node/Express. Its function was simple: run semantic analysis on the Intercom tickets, match them with relevant entries, and serve them in Intercom.

If the bot didn’t find matching entries, it’d simply greet the user and inform them of an incoming human response.

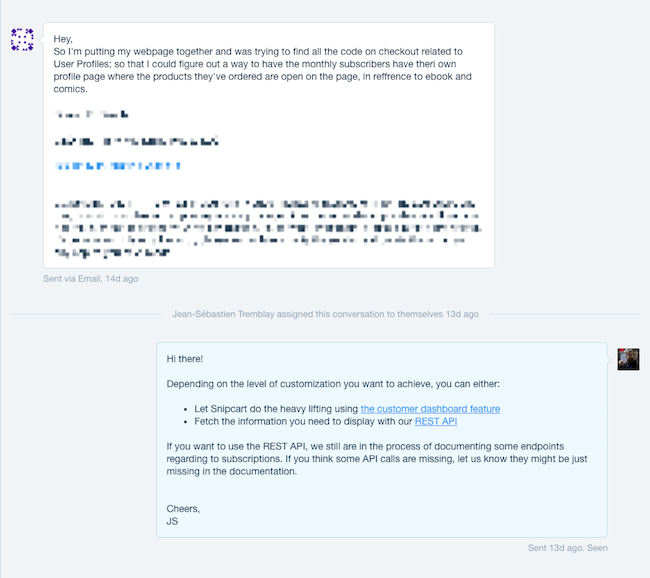
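For the curious, here’s a minimal sketch of that match-or-handoff flow. It is not our actual bot: the entry shape, the sample entries, the function names, and the reply wording are all made up for illustration, and it swaps semantic analysis for plain keyword overlap, which is the simplest thing that could work on keyword-tagged docs.

```typescript
// Hypothetical shape of a documentation entry tagged with keywords.
interface DocEntry {
  title: string;
  url: string;
  keywords: string[];
}

// Illustrative entries only; titles, URLs, and keywords are invented.
const docs: DocEntry[] = [
  {
    title: "Configuring shipping rates",
    url: "https://docs.example.com/shipping",
    keywords: ["shipping", "rates", "carrier"],
  },
  {
    title: "Handling webhooks",
    url: "https://docs.example.com/webhooks",
    keywords: ["webhook", "webhooks", "events"],
  },
];

// Lowercase the message and split it into words on non-alphanumerics.
function tokenize(message: string): string[] {
  return message
    .toLowerCase()
    .split(/[^a-z0-9]+/)
    .filter((word) => word.length > 0);
}

// Rank entries by how many of their keywords appear in the message,
// dropping entries with no hits at all.
function matchEntries(message: string, entries: DocEntry[], limit = 3): DocEntry[] {
  const words = tokenize(message);
  return entries
    .map((entry) => ({
      entry,
      score: entry.keywords.filter((k) => words.indexOf(k.toLowerCase()) !== -1).length,
    }))
    .filter((scored) => scored.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((scored) => scored.entry);
}

// Build the bot's reply: suggested docs links, or a handoff message
// when nothing matches.
function buildReply(message: string, entries: DocEntry[]): string {
  const matches = matchEntries(message, entries);
  if (matches.length === 0) {
    return "Hi there! A human from our team will get back to you shortly.";
  }
  const links = matches.map((m) => `- ${m.title}: ${m.url}`).join("\n");
  return `Hi there! While you wait for us, these docs entries might help:\n${links}`;
}
```

In a real setup, something like `buildReply` would sit inside the webhook handler and its output would be posted back to the conversation through the support platform’s API; that wiring is left out here on purpose.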

As you can see, we were doing the typical bot-to-human handoff. If our dumb bot couldn’t answer, we’d take over. In other words, we simply applied a layer of automation on top of support to improve both our lives and our customers’.

Injecting these answers into the very channel customers used, often (we thought) without consulting the documentation, was key for us. First, it answered expectations of “good and fast service” with valuable answers and not just “please wait” placeholders. Second, it actualized the help potential lingering in our static documentation.

You’re probably thinking: “sure, cool stuff, but did it work?”

Well, it kind of did, actually!

Results: making sense of numbers & words

We tracked the following results over a four-month period.

Support metrics

- 6.5% of tickets were fully answered by the bot (only human input = friendly goodbye, closing ticket)

- 10% of tickets were partially answered by the bot (quick human follow-up)

- 83.5% of tickets weren’t answered by the bot (human solved issue)

Merging the first two points, our dumb bot fully or partially handled 16.5% of tickets (6.5% + 10%), which we read as roughly a 16.5% reduction in support efforts. For the time we spent on it, that’s great!

Engagement metrics

We tracked behaviour and results in Google Analytics with simple UTM parameters on our bot-suggested links:

- 5% of leads who hadn’t yet engaged with the product converted into active users following a bot conversation (more than 2x our usual conversion rate)

- Bounce rate on site sessions triggered by bot links was low, around 48%

- Users consulted 3.5 pages/session & spent over 5 minutes on site
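The UTM tagging behind these numbers can be sketched in a few lines. This is an illustration under assumptions, not our production code: the `withUtm` name and the source, medium, and campaign values are hypothetical; only the standard `utm_source`, `utm_medium`, and `utm_campaign` parameter names are the real convention.

```typescript
// Append UTM parameters to a docs link so that sessions started from a
// bot suggestion can be segmented in analytics.
// The source/medium values below are hypothetical placeholders.
function withUtm(link: string, campaign: string): string {
  const url = new URL(link);
  // set() overwrites an existing parameter of the same name instead of
  // duplicating it, and leaves any other query parameters untouched.
  url.searchParams.set("utm_source", "intercom");
  url.searchParams.set("utm_medium", "chatbot");
  url.searchParams.set("utm_campaign", campaign);
  return url.toString();
}
```

For instance, `withUtm("https://docs.example.com/shipping", "support-bot")` returns `https://docs.example.com/shipping?utm_source=intercom&utm_medium=chatbot&utm_campaign=support-bot`. Filtering on that campaign in your analytics tool then isolates the sessions the bot triggered.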

More importantly, this data tells us that one of our assumptions — the “laziness” of users — was wrong. They either found an answer and kept looking, or looked without finding one. Considering a majority got back to us on Intercom, the latter seems to have happened more often. The insight here? Bot redirections weren’t solving a majority of user issues.

Qualitative results

After focusing on hard metrics, we turned to softer data: conversations. We analyzed over 100 individual threads with users and noted our observations.

While this qualitative approach was indeed time-consuming, it was well worth it. It drove us to three conclusions:

- Users, more often than not, did try to find answers on their own in the documentation. Many times, the answers they were looking for simply weren’t there, or weren’t easy to find.

- There was a clear lack of “structured content” in our documentation (cookbooks, recipes, workflows).

- The developer-first, flexible approach to e-commerce we champion inevitably increases the complexity and diversity of support questions. And it doesn’t stop merchants from asking direct questions themselves!

Wrapping up: what happened exactly?

Influenced by the droves of chatbot articles sweeping the web, we opted for an impulsive, trendy shortcut to solve a deep problem. While we often praise focusing on the basics, we did the opposite. That being said, our bot did help us out (and still does). However, this whole episode was a clear reminder to practice what we preach and give a whole lotta love to our documentation.

I like to think our bot, while not having all the conversations we would’ve wanted it to have with customers, at least had a very useful one for us:

DUMB-BOT: Guys, you’re not giving me enough well-articulated information to feed your users. You need to refactor your docs.

SNIPCART TEAM: You’re right, dumb bot. We’ll get on it.

All in all, I’d say we sort of led a half-experiment with this support crisis. A full one would’ve been to refactor our help content (docs) too. But I’m glad we drank the chatbot Kool-Aid a little on this one. Because our chatbot experiment, while it did help with support a bit, also reminded us that:

- There are content gaps and architectural issues to address in our docs.

- With a product as flexible as ours, some conversations will inevitably need — and benefit from — personalized, “human” input.

Focusing on these will allow us to better serve interested and active users.

And these people are just vital to a SaaS’ success. They’re way down your conversion funnel — they’ve taken action, signaled interest, and often started using your product. Either their money’s out in their hand, or already in your pocket. As a SaaS business, you can’t afford to not give these users the care they deserve.

So should you build a chatbot for your SaaS support?

Well… maybe?

I think it’s important to state that a 16.5% reduction in support efforts (our numbers) can mean a lot of time and money saved, especially for mid- to large-sized SaaS businesses. So a chatbot experiment might be well worth your time, particularly if your product usage is more streamlined than ours!

Also if, like us, you host most of your help content yourself, building a bot and analyzing its interactions might help you leverage this content and improve it. There are cool platform-specific bots on the market, like Intercom’s Operator, but they require help content to be hosted on the third-party platform itself. A few reasons why we prefer hosting it ourselves:

- Control branding & visual experience

- Control depth and format of content

- Improve SEO (bigger site architecture, more thematic authority on-site, inbound links pointing to own domain, technical keyword targeting, etc.)

Anyhow, I’d love to hear other thoughts and experiences on leveraging bots for your SaaS or business!

I’ll be following the comments section closely, should you want to participate. :)

If you’ve enjoyed this piece, share it somewhere online. That’d be cool.

This post first appeared on Chatbots Magazine.